About

I am a trusted provider of information technology (IT) services and software solutions. With 19 years of solid experience in the information technology field, I specialize in delivering high-quality IT solutions that address all client requirements, from simple IT infrastructure to complex data centers. I provide high-quality services to clients in a sustained manner, building long-term relationships. Whether working in a virtual environment or on complex cloud infrastructure, I feel that a key component of a successful project is proper and accurate communication.

IT Cloud Architect & Freelancer.

As an IT Consultant and Solution Architect, I can personally help you with any challenges you are facing in the projects you are working on.

- Birthday: 20 August 1981

- Website: www.premprakash.in

- Phone: +66 096 152 7767

- City: Bangkok, TH

- Age: 41

- Degree: Master

- Email: me@premprakash.in

- Freelance: Available

Good morning, I am Prem, a commerce graduate from Delhi University, Delhi, India. I began my career as a DBA with a retail company and later switched to the role of Server Analyst with several companies. I have been working in the IT domain since 2002. Over the years I have gained expertise in analyzing the competitive market of the company’s clients and in identifying business IT requirements, existing solutions, and enhancements for clients. My proven market analysis and experience have allowed me to achieve long-term success for my company’s clients, which I believe is in line with your company motto, “Providing a guaranteed solution to our clients.”

Facts

After leaving my regular employers, I started working on various freelance sites. Since then, I have built a long-standing clientele by solving their problems and earning the trust that a two-way business relationship needs.

Happy Clients

Projects

Hours Of Support

Co-Workers

Skills

I have covered almost all of these technologies over my long career. I can help you with simple to complex configuration, implementation, or troubleshooting in server technologies, networks, web servers, databases, Kubernetes, mail exchanges, security audits, and more.

Resume

Motivated individual with a flair for constantly raising the bar to exceed the objectives set; can quickly adapt to an organization's ethos. Innovative, with the ability to think laterally; ensures implementation through successful working relationships across the enterprise.

Summary

Prem Prakash

Innovative and deadline-driven IT Solution Architect with 13+ years of experience, from development to implementation and from initial concept to final, polished deliverable.

- 13/4 Soi 11, Bangkok, TH

- (66) 096-152-7767

- me@premprakash.in

Education

Master of Business Administration & Graphic Design

2015 - 2016

Symbiosis University, Pune, India

To understand business strategies and improve my managerial skills, I completed the MBA program at Symbiosis University, Pune. This helped me a lot in understanding and upgrading my current work culture.

Bachelor of Commerce

2010 - 2014

Delhi University, New Delhi, India

I graduated in commerce and worked as an accountant at the start of my professional journey. I have always admired accounting for how things are calculated and how an organization works on those statistics.

Professional Experience

Consultant - Cloud Solutions

2021 - Present

Mphasis India Ltd, Pune, India

- Lead the design, development, and implementation of the technologies, layout, and production communication to stakeholders.

- Delegate tasks to the 7 members of the IT Support team and provide counsel on all aspects of the project.

- Supervise the assessment of all IT resources to ensure the quality and accuracy of the design.

- Oversee the efficient use of production project budgets ranging from $2,000 to $25,000.

System Architect

2016 - 2021

Eli Research, Delhi, India

- Developed numerous IT solutions for production (automation, CI/CD, monolithic to distributed applications, presentations, and knowledge base articles).

- Managed up to 5 projects or tasks at a given time while under pressure.

- Recommended and consulted with clients on the most appropriate application design.

- Created 4+ application presentations and proposals a month for clients and account managers.

Services

We collaborate with brands and agencies to create memorable experiences. Think of us as more of a creative partner than a resource. This means we have a shared perspective on how we can work together to achieve your goals. Basically, your new BFF.

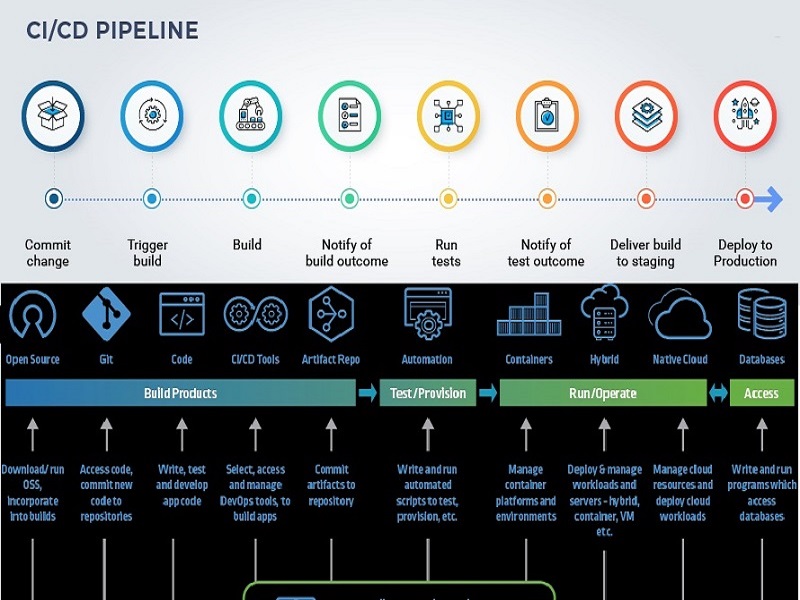

DevOps Automation

DevOps automation adds technology that performs tasks with reduced human assistance to the processes that facilitate feedback loops between operations and development teams, so that iterative updates can be deployed faster to applications in production.

Application Development

Application development is the process of creating a computer program or a set of programs to perform the different tasks that a business requires. From calculating monthly expenses to scheduling sales reports, applications help businesses automate processes and increase efficiency.

Server Technologies

Server technology refers to types of computers within a business that manage and provide access to specific resources for other devices and users. Servers may be dedicated to managing access to files, web content, multimedia content, email, real-time messaging, games, backups, applications, and more.

Database Solutions

The Solution Database is a repository of information that is stored as problems and solutions and is indexed for immediate retrieval. It also provides multi-language support.

Web Technologies

Web technology refers to the means by which computers communicate with each other using markup languages and multimedia packages. It gives us a way to interact with hosted information, like websites. Web technology involves the use of Hypertext Markup Language (HTML) and Cascading Style Sheets (CSS).

Containerization

Containerization is a type of virtualization in which all the components of an application are bundled into a single container image and can be run in isolated user space on the same shared operating system. Containers are lightweight, portable, and highly conducive to automation.

Testimonials

Words of appreciation always make you feel good about your work and build the confidence to work even harder. Here are a few words of appreciation from our clients.

Contact

We are experts in software product development with deep proficiency in offshore software development using Agile methodologies. We have been serving global clients for over 20 years, some of whom have been working with us since their inception.

Location:

13/4 Soi 11, Bangkok, TH 20155

Email:

info@premprakash.in

Call:

+66 096 152 7767